Is VR the future of remote meetings?

If there is one thing we have all learned from lockdown, it’s that Zoom-style meetings are exhausting. In theory a video call is no different from a standard team briefing, but in practice it leaves you drained. So while the future of work might be remote, the future of working is not the videoconference as we know it today. But what is the alternative?

Why is the current format of videoconferencing so tiring?

Much of the problem with video meetings is the lack of natural cues, combined with the feeling of being watched all the time. In face-to-face conversation, we rely heavily on non-verbal signals to work out the meaning behind what is being said. The widely quoted 7-38-55 rule, based on psychologist Albert Mehrabian’s studies of how feelings and attitudes are communicated, puts the split at seven per cent for the words themselves, 38 per cent for tone of voice and 55 per cent for body language. Video calls strip away most body-language cues, but because the other person is still visible, your brain keeps trying to process that non-verbal information. You end up working harder to achieve the impossible, which can reduce how much information you retain and leave participants unnecessarily tired.

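During the pandemic, there have been attempts to move beyond the flat, 2D imagery of today’s video meetings and make them more accessible. Microsoft Teams’ “Together” mode, which places participants side by side in a shared virtual setting, is one example. But these efforts do not resolve the underlying problem of “presence”: the sense of genuinely being in the same space as the other people in the meeting.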

At least part of the answer could lie in the growing world of VR gaming.

How can VR gaming help improve the future of videoconferencing?

In VR gaming, the action on screen is driven by the player’s input, usually through a gamepad or keyboard, and the player is represented visually by an avatar. This gives the human operator a degree of distance from their online presence: if they need to scratch their nose, the avatar does not copy them. On a video call, by contrast, every movement is transmitted immediately and seen by the other participants, and that puts extra pressure on everyone involved.

Of course, most meetings are not about shooting and fighting, so the way we interact will be very different and will need a different type of control. This is where the voice becomes so important.

To avoid transmitting every unintended movement, we want to be able to turn the camera off completely and have the avatars’ actions driven by what the user is saying. Modern speech recognition and speech analysis techniques let us understand both what is being said and how it is being said. If the tone is light and friendly, the avatar relaxes and smiles; if the tone is aggressive, it may lean forward to make a point. We can seat everyone naturally around a boardroom table, or we can all sit on the deck of a yacht sipping cocktails. The setting is irrelevant. The point is that this simplifies the information our brains need to process, which should help reduce ‘Zoom fatigue’.
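The voice-driven avatar behaviour described above can be sketched as a simple rule table. The Python example that follows assumes a hypothetical speech-analysis stage that scores each utterance for valence (how positive it sounds) and arousal (how energised it sounds). The `ToneEstimate` type, the thresholds and the animation cue names are all illustrative assumptions, not Intelligent Voice’s implementation.

```python
from dataclasses import dataclass


@dataclass
class ToneEstimate:
    """Hypothetical output of a speech-analysis model for one utterance."""
    valence: float  # -1.0 (hostile/negative) to 1.0 (friendly/positive)
    arousal: float  # 0.0 (calm) to 1.0 (highly energised)


def choose_avatar_behaviour(tone: ToneEstimate) -> list[str]:
    """Map an estimated tone of voice to avatar animation cues.

    Thresholds and cue names are illustrative assumptions.
    """
    if tone.valence > 0.3 and tone.arousal < 0.6:
        # Light, friendly tone: relax and smile
        return ["relax_posture", "smile"]
    if tone.valence < -0.3 and tone.arousal >= 0.6:
        # Aggressive tone: lean forward to make a point
        return ["lean_forward"]
    # Anything else: keep a neutral idle animation
    return ["neutral_idle"]


print(choose_avatar_behaviour(ToneEstimate(valence=0.7, arousal=0.2)))
# ['relax_posture', 'smile']
print(choose_avatar_behaviour(ToneEstimate(valence=-0.8, arousal=0.9)))
# ['lean_forward']
```

A real system would probably blend animations continuously and smooth them over time rather than switching on hard thresholds, but the rule table shows the basic idea of replacing camera-tracked movement with speech-driven cues.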

Room for improvement

Of course, VR cannot solve every problem. The downside of this type of experience is its artificiality. In VR worlds modelled on video games, we have to wear bulky headsets that cut us off completely from our surroundings, which can be disorienting and uncomfortable. And while the scenarios might look like real life, they risk being either too unreal to be credible or almost too lifelike to be comfortable.

The current form of videoconferencing is not sustainable. It is not only exhausting but lonely too: according to Totaljobs, 46 per cent of UK workers reported feeling lonely during lockdown. A shared virtual space reduces that sense of isolation, because we can interact more with others and feel more included.

A complete virtual boardroom may not be a viable solution for everyone, however, and the answer could be to take an augmented or mixed reality (AR/MR) approach instead, anchoring participants’ avatars in each person’s real-world surroundings while removing the mental strain of indecipherable visual cues. An AR or MR meeting still provides a shared experience, since each participant meets the avatars of the others in their own environment, whether that is a home office or the kitchen table. Here the technology adapts to each participant’s real-life setting, avoiding the disorientation of a fully immersive VR environment.

When it comes to AR/MR devices, there are numerous options. At the top end are the Magic Leap One and Microsoft HoloLens 2, which are high-quality immersive devices but rely on dedicated computing hardware and cost thousands of dollars. At the other end are inexpensive cardboard AR viewers such as Aryzon and HoloKit, which show the AR image from your mobile phone in split-screen mode and use a mirror to optically mix the AR content into your field of view. The drawback of these devices, aside from their rough-and-ready look, is that the reflected AR content lacks intensity and washes out in even moderately well-lit environments.

A promising new low-cost device is ZapBox, which, like the cardboard options, mounts your phone in a glasses-style cradle, but far more ergonomically. ZapBox offers clearer viewing in any lighting conditions because the user sees the AR/VR content directly, through a lens placed over the phone’s screen. However, this reduces the effective resolution: the screen is first split in two, one half for each eye, and then only part of each half carries the image that is visible through its lens.

Because the user looks directly at the screen, viewing sessions may also need to be shorter: looking straight at a light source could tire the user faster than the reflective cardboard options do. ZapBox has not been released yet, so it is still something of an unknown quantity, but it is an interesting one.
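To make the resolution cost of a ZapBox-style lens viewer concrete, the short Python sketch that follows does the arithmetic for a hypothetical phone. The screen size (2400 × 1080 pixels in landscape) and the share of each half that remains visible through the lens (80 per cent in each direction) are illustrative assumptions, not ZapBox specifications.

```python
def per_eye_resolution(screen_w: int, screen_h: int, visible_pct: int) -> tuple[int, int]:
    """Pixels visible to one eye in a split-screen lens viewer.

    Assumes the landscape screen is split down the middle (one half per eye)
    and that only `visible_pct` per cent of each half's width and height is
    seen through the lens. Both assumptions are illustrative.
    """
    half_w = screen_w // 2  # step 1: split the screen between the two eyes
    # step 2: the lens only shows a central portion of each half
    return half_w * visible_pct // 100, screen_h * visible_pct // 100


def share_of_screen(screen_w: int, screen_h: int, visible_pct: int) -> float:
    """Fraction of the full screen's pixels that one eye actually sees."""
    eye_w, eye_h = per_eye_resolution(screen_w, screen_h, visible_pct)
    return (eye_w * eye_h) / (screen_w * screen_h)


# Hypothetical 2400 x 1080 phone, 80% of each half visible through the lens
print(per_eye_resolution(2400, 1080, 80))  # (960, 864)
print(share_of_screen(2400, 1080, 80))  # 0.32
```

Under these assumed figures each eye sees roughly a third of the phone’s pixels, which illustrates the trade-off described above: better visibility in bright rooms in exchange for a lower effective resolution.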

Mobile hardware is making giant strides in pushing forward the development of AR technology. Recognising the potential of AR early on, the two main mobile platforms, Android and iOS, both offer software development kits for building AR applications, ARCore and ARKit respectively, backed by the hardware in recent mobile devices. For many applications, viewing AR content on the phone screen in mono AR mode (without split screen) is less immersive but still a viable option.

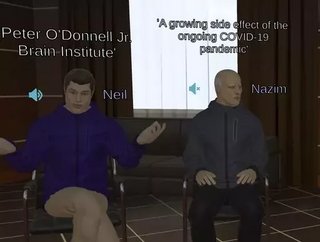

The technology we are developing uses both the Android and iOS developer kits to build an immersive meeting experience. The product, which we are calling iVXR (short for Intelligent Voice Extended Reality), supports a mono, single-screen mode for hand-held AR/VR meetings, as well as the inexpensive cardboard viewers in split-screen AR or VR mode. It is built on the Unity game engine, a proven basis for massively multiplayer applications, and combining this with the inexpensive AR hardware we favour is intended to make it useful to a wider community of potential users. We have integrated our state-of-the-art speech and natural language understanding technology into iVXR so that what is said is visible to the meeting participants, is captured in a meeting transcript shared with them, and remains available afterwards as a permanent record.
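The actual iVXR transcript format is not described here, but one plausible way to turn recognised speech from several participants into a single permanent record is to collect timestamped utterances and keep them ordered by start time. The Python sketch that follows assumes a hypothetical `Utterance` record (speaker, start time in seconds, recognised text) produced by some speech recognition stage; the class names and output layout are illustrative only.

```python
from dataclasses import dataclass, field


@dataclass
class Utterance:
    """One recognised stretch of speech (hypothetical format)."""
    speaker: str
    start_s: float  # seconds from the start of the meeting
    text: str


@dataclass
class MeetingTranscript:
    """Collects utterances from all participants into one ordered record."""
    utterances: list[Utterance] = field(default_factory=list)

    def add(self, utterance: Utterance) -> None:
        # Recognition results from different participants may arrive out of
        # order, so keep the list sorted by start time.
        self.utterances.append(utterance)
        self.utterances.sort(key=lambda u: u.start_s)

    def render(self) -> str:
        """Format the transcript as '[mm:ss] Speaker: text' lines."""
        lines = []
        for u in self.utterances:
            minutes, seconds = divmod(int(u.start_s), 60)
            lines.append(f"[{minutes:02d}:{seconds:02d}] {u.speaker}: {u.text}")
        return "\n".join(lines)


transcript = MeetingTranscript()
transcript.add(Utterance("Bob", 75.2, "Agreed, let's ship it."))
transcript.add(Utterance("Alice", 12.0, "Shall we start with the roadmap?"))
print(transcript.render())
# [00:12] Alice: Shall we start with the roadmap?
# [01:15] Bob: Agreed, let's ship it.
```

Sorting on every insert keeps the sketch simple; for long meetings, inserting each utterance at its sorted position would avoid re-sorting the whole list.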

As more AR applications and hardware are developed, the price of AR headsets, which has already come down, is likely to keep falling, just as VR headset prices have: compare the price of an Oculus headset today with its price at launch.

AR technology is now moving faster than ever. It is still an area that needs further exploration, but it holds a great deal of promise, and I can see it becoming a big part of business in the near future.

Nigel Cannings is CTO at Intelligent Voice